The Linux Desktop Distribution of the Future Part 3

Category: Conceptual Design

This article is part of a four-part series intended to offer some thoughts on how a future Linux Desktop might work. It is not intended to be a comprehensive essay, although the author considers all of the concepts presented here "doable".

Part 1: Linux and the Desktop Today

Part 2: Applications

Part 3: File Management

Part 4: The Desktop Interface

Part 3: File Management

As computer systems grow in complexity, they tend to drag that complexity into the user's filesystem, blurring the line between the files that matter to the user and the files that exist only as application data. The user is left unsure where his files should be placed. In more extreme cases, he can even lose track of where his documents are stored! In this episode we'll look at ways in which a Linux system can further reduce complexity in these areas.

Database File Systems

If you haven't read my previous article on Database File Systems, I highly recommend that you do so now.

The key to untangling the complexity of modern file systems is to separate system files from documents. Existing systems have attempted to solve this by encouraging users to place documents in their home directory, but this can create more problems because the user interface hooks into special directories. Under existing Linux interfaces, users may not even be able to access files on their desktop without using the graphical interface!

In the previous episode we reduced the complexity of applications by effectively making them into documents. But that still leaves the user with some confusion as to how to organize their documents and applications vs. their system files. To solve this, we need to split the file system into two areas:

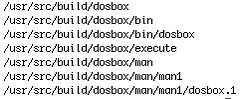

- A partition for the core system libraries, root files, and /usr files.

- A DBFS partition for the user's applications and documents.

Core System Libraries

One of the most important things that a desktop user needs is to get out of the business of maintaining system files. Such maintenance was always problematic for Windows users and even gained the title of "DLL Hell". Microsoft eventually solved this issue through several OS changes, not the least of which was encouraging application writers to keep their DLLs in their own program directories. Most Windows programs today install into a folder in the "Program Files" directory with no extraneous files in the System32 directory.

Linux, on the other hand, took the approach of package management. No real standard existed for the base system; instead, the core libraries could be updated at will. This led to a situation where a given distro version may mean a different set of available APIs on each installation. Standards such as LSB have been proposed to help alleviate this situation, but such efforts have mostly focused on providing a minimum set of low-level APIs. What a desktop-focused distro needs is for the developer to be able to count on a specific set of APIs for a given system level.

For example, let's create a mythical desktop Linux OS called DeskLin. For version 5.0, DeskLin publishes APIs for GTK 2.1 and Qt 3.2. Thus all programs based on GTK 2.1 or lower and Qt 3.2 or lower should work. If a developer wants to create a program that uses the FLTK toolkit instead, he'll need to package the FLTK shared objects in the lib folder of his application bundle. Now let's say that DeskLin Inc. notices a large number of applications using the FLTK APIs. To reduce waste, they may choose to include FLTK as a core API in DeskLin 6.0. Application developers can then rely on these APIs as long as they target version 6.0 and up of the DeskLin distribution. The application developer, however, can still target version 5.0 by including the FLTK libraries.
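To make the DeskLin example concrete, here is a minimal sketch of how a packaging tool might decide which libraries an application has to bundle for a given target release. Everything in it is hypothetical: the `CORE_APIS` table, the function name, and in particular the FLTK version number, which is only a placeholder since the example above does not specify one.

```python
# Hypothetical table of core APIs published by each DeskLin release.
# Versions are (major, minor) tuples; the FLTK version is a made-up placeholder.
CORE_APIS = {
    "5.0": {"gtk": (2, 1), "qt": (3, 2)},
    "6.0": {"gtk": (2, 1), "qt": (3, 2), "fltk": (1, 1)},
}


def libraries_to_bundle(target_release, needs):
    """Return the toolkits an application must ship in its own lib folder.

    `needs` maps a toolkit name to the version the application was built
    against. A toolkit must be bundled when the target release does not
    publish it at all, or publishes only an older version.
    """
    core = CORE_APIS[target_release]
    return sorted(
        name
        for name, version in needs.items()
        if name not in core or version > core[name]
    )


if __name__ == "__main__":
    app_needs = {"fltk": (1, 1)}
    print("Target 5.0, bundle:", libraries_to_bundle("5.0", app_needs))
    print("Target 6.0, bundle:", libraries_to_bundle("6.0", app_needs))
```

Under these assumptions, an FLTK application targeting 5.0 has to carry FLTK with it, while the same application targeting 6.0 carries nothing extra.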

The end result of this process is that control of the system APIs is taken away from the user. While many Linux purists would argue against such a step, it's important to note that I am not advocating taking this step for all distributions. In workstation and server environments it can be critically important that the user maintain complete control over his system. Only Desktop-oriented distributions that are looking to target home users should break off and take these steps.

Of course, security updates will still be an issue. As a result, it makes sense for a Desktop distribution to carry an installer mechanism such as Autopackage. This installer mechanism would allow for patches to be applied to the system quickly and easily. If possible, such patches should be automated. Beyond that, the core libraries should be hidden from the user and made read-only.

Documents

Unlike system libraries, documents are something users DO want to manage, and they are always happy when they are given more power to do so. The best solution for users is to move their documents, and only their documents, into a database file system. In many other OSes this might be a problem, as only one filesystem tree exists. In Linux we can get away with much more.

Under Linux we can create a new VFS module that handles a DBFS partition independent from the system files partition. The DBFS can then be seamlessly integrated by mounting it onto a standardized subdirectory such as /Documents. To a terminal and classic Linux programs, the DBFS Labels would look like normal directories. Files outside of the /Documents folder would be non-writable for normal users. Queries to the file system can be performed through a special /proc or /dev interface, allowing the Desktop to quickly search for the exact files the user is looking for.

This arrangement does pose a few problems, however. In a database file system, it is possible for the same file name to occur more than once, possibly even under the same label. As a result, it is very important for programs to start using the INode number for the file instead of the abstract path. Two solutions immediately come to mind for dealing with "Classical" software programs:

- Allow for the final component of the path to be the INode number. This could be achieved with special file names such as "#1234" or "@1234". Such names are not normally used in an everyday Unix system, and are thus unlikely to be invoked by accident. These names can be passed to existing software programs to ensure that the correct file is selected. There is still an issue if the program displays the filename, however.

- Take a page from the Windows VFAT scheme and display duplicates under the same label with special filename extensions. For example, two files named "Bill.doc" could become "Bill #1.doc" and "Bill #2.doc". The only downside is that the user may become confused by the numbering scheme, especially if he attempts to rename the file to include the special addition.
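Both ideas can be sketched in a few lines. This is only an illustration under stated assumptions: the function names are hypothetical, the "#" prefix is just one of the two candidate markers mentioned above, and duplicates are numbered in ascending INode order, which is my own arbitrary choice.

```python
import os
from collections import defaultdict


def inode_path(label_path, inode):
    """Build a path whose final component is the INode number (first idea)."""
    return f"{label_path}/#{inode}"


def display_names(files):
    """Map each INode to the name shown to classic programs (second idea).

    `files` is a list of (inode, name) pairs that all live under one label.
    Unique names are shown unchanged; duplicates get " #1", " #2", ... added
    before the extension.
    """
    by_name = defaultdict(list)
    for inode, name in files:
        by_name[name].append(inode)

    shown = {}
    for name, inodes in by_name.items():
        if len(inodes) == 1:
            shown[inodes[0]] = name
            continue
        stem, ext = os.path.splitext(name)
        for n, inode in enumerate(sorted(inodes), start=1):
            shown[inode] = f"{stem} #{n}{ext}"
    return shown


if __name__ == "__main__":
    label = [(1234, "Bill.doc"), (5678, "Bill.doc"), (42, "Notes.txt")]
    for inode, name in sorted(display_names(label).items()):
        print(inode_path("/Documents/Letters", inode), "->", name)
```

The sketch does not handle a user who really does name a file "Bill #1.doc", which is exactly the confusion described in the second bullet.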

Configuration Files

The one type of file that I haven't yet addressed is program configuration files. The reason for this is that I currently have no "good" place for them to go. The Windows solution of using a central registry is certainly not a bad one (although definitely not a good implementation), but adds one more complex abstraction for the user to deal with. In addition, it is far less feasible to force Linux programs to make the switch to a registry solution than it was for Windows programs. (Central control does have its advantages.)

The best solution I can come up with at the moment is to hook into the Unix "standard" for configuration files in order to create a virtual registry in the file system. The idea is as follows:

When the DBFS detects a file or directory created with the "." prefix (a naming convention that hides a file in Unix), it immediately traces the creating program back to its on-disk image. Instead of creating the file, the DBFS makes it a binary meta-data attachment to the program image. The resulting pseudo-file is then indexed by the DBFS so that a regedit-like management program can quickly retrieve a list of all programs with such configuration files.
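As a rough illustration of the idea, here is a toy in-memory model of that redirection. It is not a VFS module and does not trace real processes: the `ProgramImage` and `ToyDBFS` classes are hypothetical, the creating program is simply passed in by the caller, and paths are assumed to be already normalized (no "." or ".." components).

```python
class ProgramImage:
    """Stand-in for an application's on-disk image and its meta-data."""

    def __init__(self, path):
        self.path = path
        self.metadata = {}  # attachment name -> bytes


class ToyDBFS:
    """Toy model: dot-prefixed creations become attachments on the creator."""

    def __init__(self):
        self.files = {}         # ordinary documents: path -> bytes
        self.config_index = {}  # program image path -> set of attachment names

    def create(self, path, data, creator):
        parts = [p for p in path.split("/") if p]
        for i, part in enumerate(parts):
            if part.startswith("."):
                # Keep everything from the hidden component onward as the key,
                # so files inside a hidden directory stay grouped together.
                key = "/".join(parts[i:])
                creator.metadata[key] = data
                self.config_index.setdefault(creator.path, set()).add(key)
                return
        self.files[path] = data

    def programs_with_config(self):
        """What a regedit-like tool would list."""
        return sorted(self.config_index)


if __name__ == "__main__":
    fs = ToyDBFS()
    editor = ProgramImage("/Documents/Applications/Editor")
    fs.create("/Documents/.editorrc", b"tabs=4\n", editor)
    fs.create("/Documents/letter.txt", b"Dear Bill,\n", editor)
    print(fs.programs_with_config())
    print(sorted(editor.metadata))
    print(sorted(fs.files))
```

The index kept in `config_index` plays the role of the DBFS index over the pseudo-files, so listing every program that has stored settings never requires walking the documents themselves.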

The downsides to this scheme are as follows:

- The application settings are permanently attached to the program. If the software application disk image is deleted, the settings will go with it. Many users would see this as an advantage, but it does depend on the program. Some larger programs are removed and later reinstalled by users due to disk space considerations. Gaming users in particular might not be happy if their Doom III saved games were lost. (Although the obvious solution to that situation is to encourage the vendor to change the saved game to be a regular document that is associated with the application.)

- Inter-program communication through configuration files is made more difficult. For example, Opera would have to support the necessary DBFS APIs to directly access the meta-data under which pre-existing bookmarks are stored in Firefox. In a traditional Unix system, Opera could just open the "."-prefixed sub-directory in the user's home folder.

DBFS Structure

While the technical details of how the DBFS stores files and meta-data are for the most part irrelevant, I'm going to quickly go over the structure to eliminate questions concerning what a DBFS can and can't do.

A DBFS as envisioned in this article would forgo the traditional storage of INodes and Directories. Instead, the data about the filesystem would look more like an SQL database. An entry would exist in the database for each file on disk, with keyed linkages to meta-data. The meta-data list would be like a table with three columns: the name of the meta-data, the type of the meta-data, and a link to the value in another table. Tables would exist for String, Integer (64-bit?), and Data Block types.

The Data Block type would be a table that would hold a list of file system blocks used by the specific piece of meta-data. All files would have a piece of meta-data of this type that would identify the file contents. (Likely named something witty like "data".) Strictly speaking though, Data Block meta-data other than the file contents may be attached. For example, the application icon may be stored in a meta-data value called "icon", or a thumbnail of a photograph may be stored under "thumbnail". In fact, the potential for storing pre-calculated binary meta-data is limited only by disk space and your imagination. Even plain old text can easily be extracted from meta-data already in a file, and stored in the file system for easy access.

Each of the "tables" in the DBFS would be appropriately indexed to provide the fastest lookup times possible. It's likely that such indexes might also be created for the contents of a file. These indexes are what would make fast file searches/queries possible.
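The structure described above can be mocked up with SQLite to show what "looks like an SQL database" might mean in practice. This is a sketch only: a real DBFS would keep these structures on disk in its own format, and every table, column, and function name below is an assumption made for illustration.

```python
import sqlite3

SCHEMA = """
CREATE TABLE files (id INTEGER PRIMARY KEY);

-- The three-column meta-data list, keyed to a file.
CREATE TABLE metadata (
    file_id  INTEGER NOT NULL REFERENCES files(id),
    name     TEXT    NOT NULL,
    type     TEXT    NOT NULL CHECK (type IN ('string', 'integer', 'data')),
    value_id INTEGER NOT NULL
);

-- One value table per type.
CREATE TABLE string_values  (id INTEGER PRIMARY KEY, value TEXT    NOT NULL);
CREATE TABLE integer_values (id INTEGER PRIMARY KEY, value INTEGER NOT NULL);
CREATE TABLE data_blocks (
    value_id INTEGER NOT NULL,
    seq      INTEGER NOT NULL,
    block_no INTEGER NOT NULL,
    PRIMARY KEY (value_id, seq)
);

-- Indexes that make name/value lookups fast.
CREATE INDEX metadata_by_name ON metadata (name, type, value_id);
CREATE INDEX strings_by_value ON string_values (value);
"""


def open_dbfs():
    """Create an empty in-memory mock of the DBFS tables."""
    db = sqlite3.connect(":memory:")
    db.executescript(SCHEMA)
    return db


def add_file(db):
    return db.execute("INSERT INTO files DEFAULT VALUES").lastrowid


def attach_string(db, file_id, name, value):
    value_id = db.execute(
        "INSERT INTO string_values (value) VALUES (?)", (value,)
    ).lastrowid
    db.execute(
        "INSERT INTO metadata VALUES (?, ?, 'string', ?)",
        (file_id, name, value_id),
    )


def attach_data(db, file_id, name, blocks):
    """Attach a non-empty list of block numbers as Data Block meta-data."""
    value_id = db.execute(
        "SELECT COALESCE(MAX(value_id), 0) + 1 FROM data_blocks"
    ).fetchone()[0]
    db.executemany(
        "INSERT INTO data_blocks VALUES (?, ?, ?)",
        [(value_id, seq, block) for seq, block in enumerate(blocks)],
    )
    db.execute(
        "INSERT INTO metadata VALUES (?, ?, 'data', ?)",
        (file_id, name, value_id),
    )


def find_by_string(db, name, value):
    """Return the ids of all files whose string meta-data `name` equals `value`."""
    rows = db.execute(
        "SELECT m.file_id FROM metadata m "
        "JOIN string_values s ON s.id = m.value_id "
        "WHERE m.type = 'string' AND m.name = ? AND s.value = ? "
        "ORDER BY m.file_id",
        (name, value),
    )
    return [row[0] for row in rows]


if __name__ == "__main__":
    db = open_dbfs()
    first = add_file(db)
    attach_string(db, first, "name", "Bill.doc")
    attach_data(db, first, "data", [100, 101, 102])
    second = add_file(db)
    attach_string(db, second, "name", "Bill.doc")
    attach_data(db, second, "thumbnail", [200])
    print(find_by_string(db, "name", "Bill.doc"))
```

Note that nothing in this layout stops two files from carrying the same "name" value, which is why duplicate file names under one label are possible in a DBFS.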

Tune in next week for the final installment, where I tie all of these features together into an easy-to-use interface!

Part 4: The Desktop Interface

Links:

LSB

Autopackage